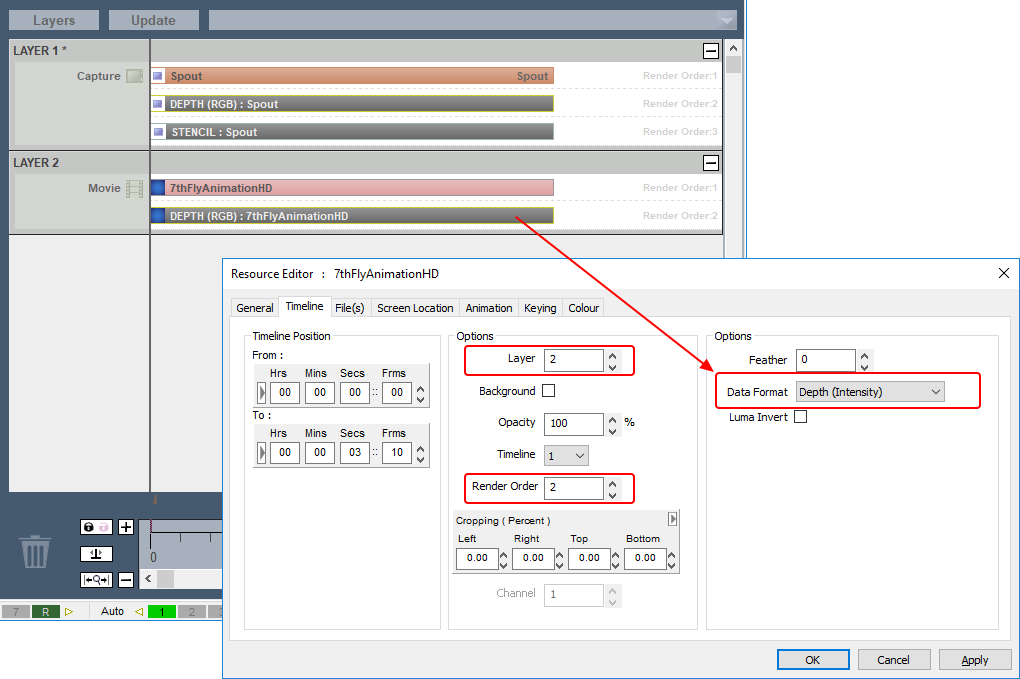

Organise media and control events chronologically for precise synchronisation of video, audio, and visual effects.

Create multiple timelines for dedicated functions and make real-time adjustments for dynamic show programming.

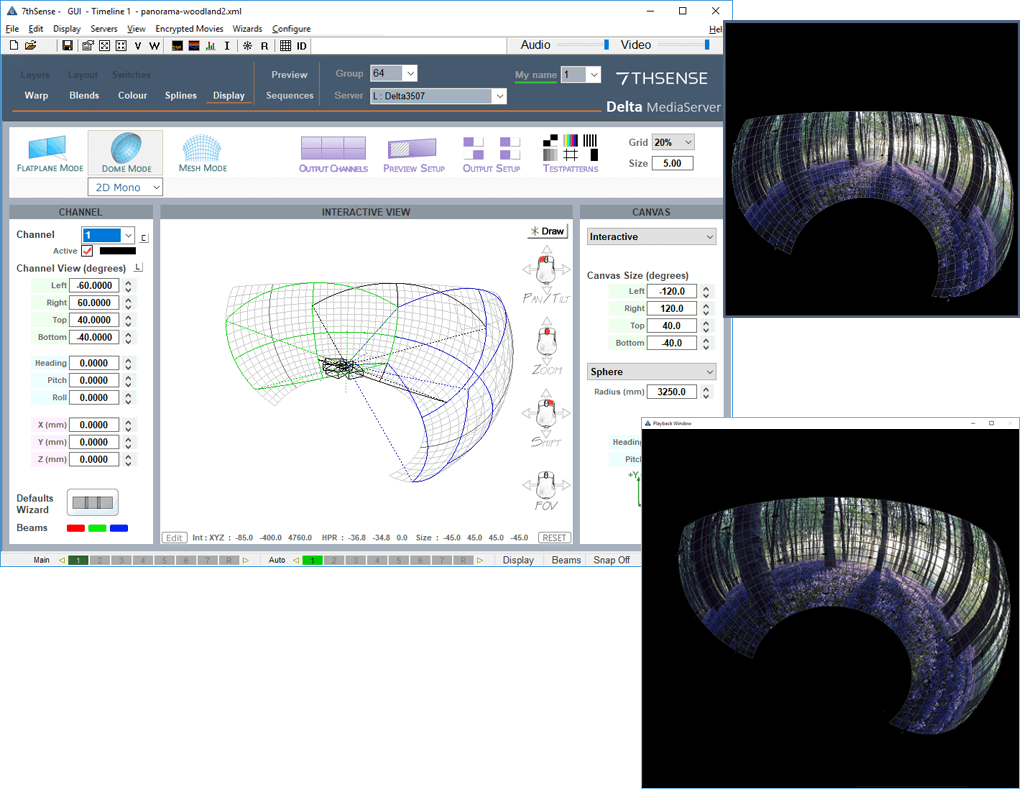

Scale, warp, colour-correct, and blend visuals to ensure image consistency and clarity across multiple display devices.

Unbeatable Performance

Kick it up a Notch®

Our media servers are designed for a wide range of applications, delivering exceptional performance in:

Projection Mapping: Project high-quality visuals onto 3D surfaces like buildings and vehicles, synchronising multiple projectors for seamless displays.

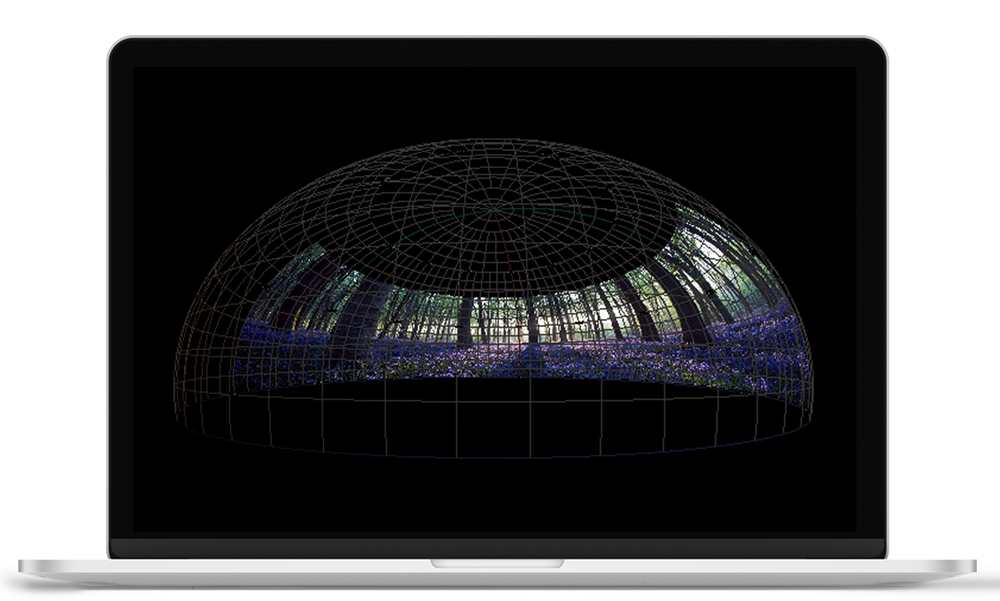

Dome Theatres & Planetariums: Manage 360-degree video and immersive audio, delivering ultra-high-resolution, edge-blended visuals in curved spaces.

Night-Time Spectaculars: Synchronise media with lighting, audio, and pyrotechnics for large-scale multimedia events, such as theme park shows.

Dark Rides: Coordinate video, lighting, audio, and animatronics for immersive, interactive experiences in theme park rides.

Retail Displays: Deliver stunning visuals across LED walls or custom setups, elevating brand presence in high-traffic retail spaces.

Museums & Visitor Centres: Create captivating exhibits with seamless media serving and real-time interactivity.

Mega Canvases & Large-Scale LED Displays: Provide flawless, high-resolution media for massive digital canvases, billboards, and immersive installations.

Cruise Ships & Stadia: Enhance onboard experiences and sports venues with synchronised, high-definition media serving.